Projected Gradient Descent for Max and Min Eigenpairs - Proof of Convergence

Let A be a \(n \times n\) positive semi-definite matrix. Let \(\lambda_1\geq \lambda_2 \geq \dots \geq \lambda_n\) be the eigenvalues in decreasing order and \(u_1,u_2,\dots,u_n\) be the corresponding orthonormal eigenvectors. We have

\[A = \sum_{i=1}^n \lambda_i u_i u_i^\top\]You need to find the Condition number of \(A\), which is defined as \(\lambda_1/\lambda_n\). You could compute all the eigenvalues and then compute the condition number. But this is superfluous as you just need to compute \(\lambda_1\) and \(\lambda_n\).

Almost two years ago, I wrote a blog post on using Projected Gradient Descent for finding the maximum and minimum eigenvectors and the corresponding eigenvalues. However, I did not offer any proof for the correctness of the algorithm. I do that in this blog post.

Maximum Eigenpair

Consider the following algorithm:

- \[x'_{t+1} = x_t + \eta A x_t = (I + \eta A)x_t\]

- \[x_{t+1} = \frac{x'_{t+1}}{\|x'_{t+1}\|_2}\]

This corresponds to doing projected gradient descent on the objective \(\min (-x^\top Ax)\) subject to \(x^\top x=1\).

Similarity to Power Iteration

Power Iteration is possibly the most widely used algorithm for computing the max eigenvector. Its update is:

\[b_{t+1}=\frac{A b_{t}}{\left\|A b_{t}\right\|} = \frac{A^{t+1} b_{0}}{\left\|A^{t+1} b_{0}\right\|}\]The PGD update could be written as:

\[x_{t+1} = \frac{(I + \eta A)x_t}{\|(I + \eta A)x_t\|_2} = \frac{(I + \eta A)^{t+1}x_0}{\|(I + \eta A)^{t+1}x_0\|_2}\]The PGD update is actually just power iteration on \(I+\eta A\). So we could try analyzing it like power iteration.

First note that \(A\) and \(I+\eta A\) have the same eigenvectors when \(\eta \geq 0\). The eigenvectors of \(I+\eta A\) are \(u_1, u_2, \dots, u_n\) and the corresponding eigenvalues are \(1+\eta \lambda_1 \geq 1+\eta \lambda_2 \geq \dots \geq 1+\eta \lambda_n\). So we know:

- \(A\) and \(I+\eta A\) have the same eigenvectors

- PGD on \(A\) is just power iteration on \(I+\eta A\)

- Power iteration converges to the maximum eigenvector

Hence, PGD will converge to the maximum eigenvector of A.

For a more rigorous proof, consider the following analysis:

If \(x_t^\top u_j \to 0\) for \(j \neq 1\), then \(x_t \to u_1\). We have:

\[\begin{align} u_j^\top x_t &= \frac{u_j^\top (I+\eta A)^t x_0}{\|(I+\eta A)^t x_0\|}\\ &= \frac{u_{j}^{\top} \sum_{i=1}^{n}\left(1+\eta \lambda_{i}\right)^{t} u_{i} u_{i}^{\top} x_{0}}{\left\|\sum_{i=1}^{n}\left(1+\eta \lambda_{i}\right)^{t} u_{i} u_{i}^{\top} x_{0}\right\|}\\ &= \frac{ \left(1+\eta \lambda_{j}\right)^{t} u_{j}^{\top} x_{0}}{\left\|\sum_{i=1}^{n}\left(1+\eta \lambda_{i}\right)^{t} u_{i} u_{i}^{\top} x_{0}\right\|}\\ &= \frac{ \left(1+\eta \lambda_{j}\right)^{t} u_{j}^{\top} x_{0}}{\left(\sum_{i=1}^{n}\left(1+\eta \lambda_{i}\right)^{2t} (u_{i}^{\top} x_{0})^2\right)^{1/2}}\\ &\leq \left(\frac{1+\eta \lambda_{j}}{1+\eta \lambda_{1}}\right)^{t} \frac{u_{j}^{T} x_{0}}{u_{1}^{T} x_{0}} \end{align}\]When \(j\neq 1\), \(x_t^\top u_j \to 0\) so \(x_t \to u_1\). Hence, The sequence \(x_t^\top A x_t\) converges to \(\lambda_1\).

Minimum Eigenpair

The minimum eigenvalue of \(A\) is the inverse of the largest eigenvalue of \(A^{-1}\). We can run power iteration on \(A^{-1}\) to compute the minimum eigenvalue. But we must compute \(A^{-1}\), which is an overhead.

I proposed PGD on the objective \(\min (x^\top Ax)\) subject to \(x^\top x=1\). This avoids computing the inverse. The update for this will be:

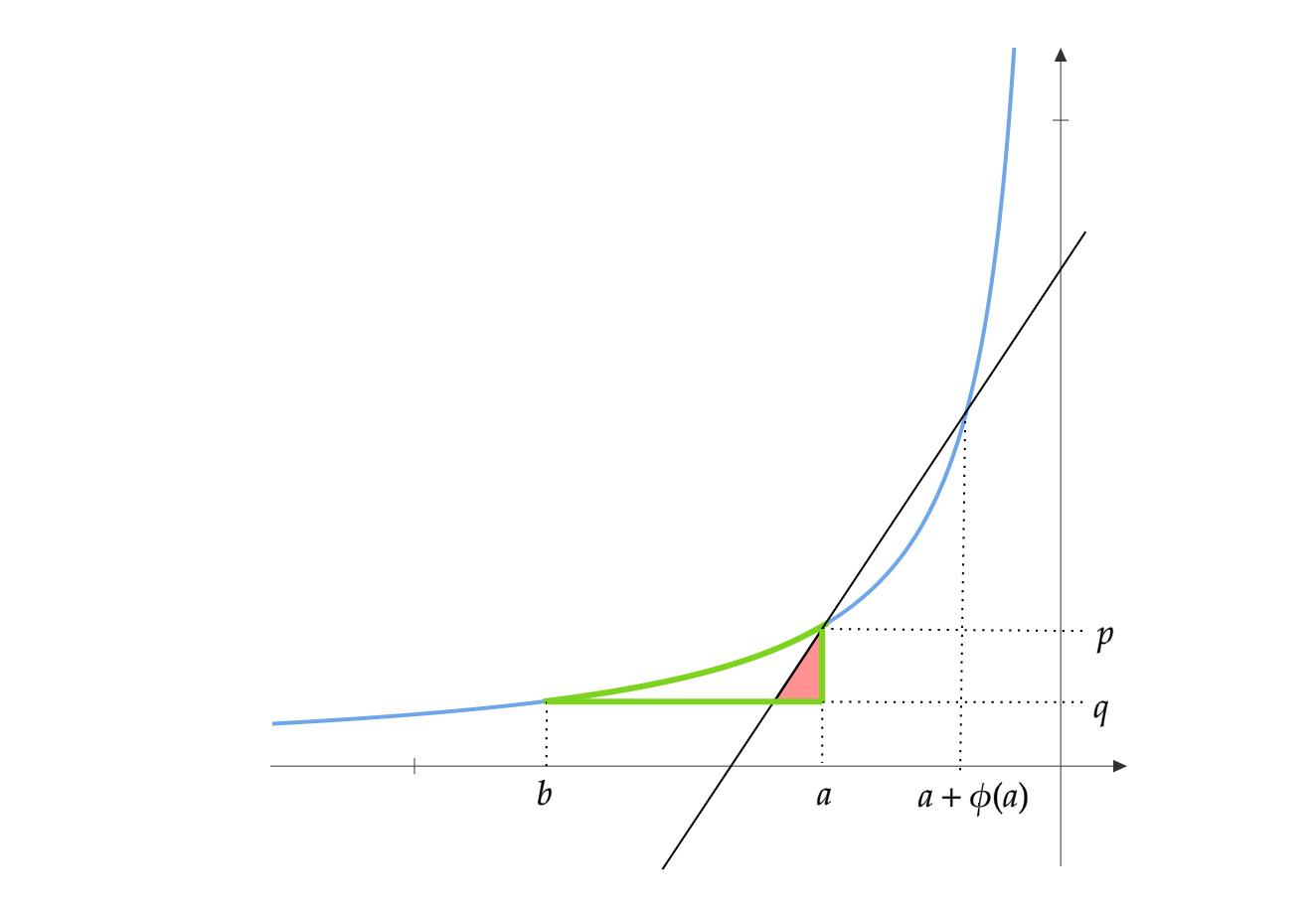

\[x_{t+1} = \frac{(I - \eta A)x_t}{\|(I - \eta A)x_t\|_2} = \frac{(I - \eta A)^{t+1}x_0}{\|(I - \eta A)^{t+1}x_0\|_2}\]Choose \(\eta < 1/\lambda_1\). Note that \(A\) and \(I - \eta A\) have the same eigenvectors. The eigenvalues corresponding to \(u_1, u_2, \dots ,u_n\) are \(1-\eta \lambda_1\leq 1-\eta \lambda_2\leq \dots \leq 1-\eta \lambda_n\). So power iteration on \(I - \eta A\) will converge to \(u_n\) and \(x_t^\top A x_t\) will converge to \(\lambda_n\).

To compute the condition number, first find the largest eigenvalue \(\lambda_1\) by using PGD. Then choose \(\eta < 1/\lambda_1\) and compute the smallest eigenvalue \(\lambda_n\) by using PGD. Compute the ratio \(\lambda_1/\lambda_n\).