Solving a Non-Convex problem by solving many Convex problems

Recall the Babylonian method for computing square roots, which you might have learnt in highschool. I propose a higher dimensional variant of that, which I use to solve a few Non-Convex problems, specifically computing Matrix Square root, Positive Semidefinite matrix completion and Euclidean Distance Matrix completion.

Babylonian method

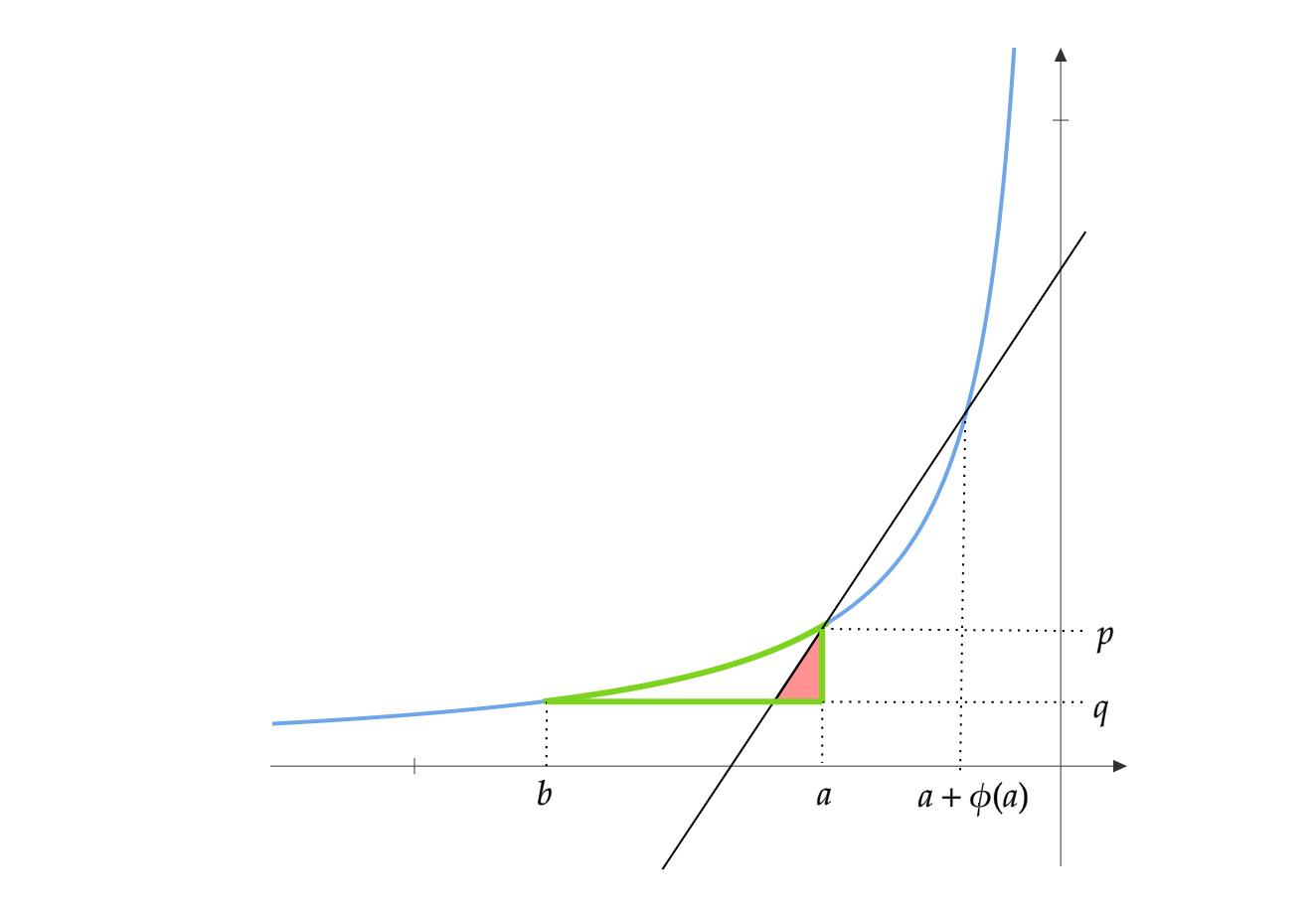

It is an iterative procedure for computing the square root of a given real number. Say we need to compute the square root of a \(a\). The first iterate \(x_0\) can be initialized to a random positive real number. The iterations proceed as:

\[x_{t} = \frac{1}{2}\bigg(x_{t-1} + \frac{a}{x_{t-1}}\bigg)\]As \(t \to \infty\), \(x_t \to \sqrt{a}\) at a quadratic rate.

Here is my interpretation of this procedure. Define the function \(f(x,y) = xy\). Let \(y_t = \arg \min_y (a - f(x_t,y))^2\). Under this interpretation, the iterations proceed as:

\[x_{t} = \frac{x_{t-1} + y_{t-1}}{2} = \frac{x_{t-1} + \arg \min_y (a - f(x_{t-1},y))^2}{2}\]To see why this is true, note that \((a-x_ty)^2\) is minimized at \(y = a/x_t\). So we get back the original Babylonian method.

Matrix Square Root

Let \(X^*\) be an unknown \(n \times n\) PSD matrix of rank \(k\). Let \(M^* = X^*X^*\). The goal here is to find \(X^*\) given \(M^*\). Trying to minimize the following objective function would not work as it is non-convex\(^1\).

\[\|M^* -XX\|_F^2\]Given \(M^*\), we can determine its rank \(k\). We initialize our initial estimate \(X_0\) to be a random PSD matrix of rank \(k\). This can be obtained by generating a random \(n \times k\) matrix and letting \(X_0\) be its gram matrix. Since matrix multiplication is not commutative, defining the function as \(f(X,Y) = XY\) would not work.

We define it as \(f(X,Y) = \frac{1}{2}(XY+YX)\), so that \(f(X,Y) = f(Y,X)\). The iterations proceed as:

\[\begin{align} Y_{t-1} &= \arg \min_{Y} \|M^* - f(X_{t-1},Y)\|_F^2 \\ &= \arg \min _Y\|2M^* - X_{t-1}Y -YX_{t-1}\|_F^2\\ X_t &= \frac{1}{2}(X_{t-1}+Y_{t-1}) \end{align}\]The subproblem \(Y_{t-1} = \arg \min_{Y} \|2M^* - X_{t-1}Y -YX_{t-1}\|_F^2\) can be solved easily as \(\|2M^* - XY -YX\|_F^2\) is convex in \(Y\). Any method for solving convex problems would give us the optimal \(Y\)

This heuristic has worked pretty well in practise. My claim is that as \(t \to \infty\), \(X_tX_t \to M^*\) at a quadratic rate.

Positive Semidefinite Matrix completion

Let \(X^*\) be an unknown \(n \times k\). Let \(G^* = X^*{X^*}^T\). Assume \(G^*\) is incoherent. Entries of \(G^*\) are sampled according to some probability \(p\)(which depends on the incoherence parameter and a few other parameters). The goal here is to find \(X^*\) given only the sampled entries of \(G^*\). Let \(\Omega\) be the binary sampling matrix. Trying to minimize the following objective function would not work as it is non-convex\(^2\).

\[\|\Omega \circ (G^* -XX^T)\|_F^2\]We initialize our initial estimate \(X_0\) to be a random \(n \times k\) matrix. We define \(f(X,Y) = \frac{1}{2}(XY^T+YX^T)\), so that \(f(X,Y) = f(Y,X)\). The iterations proceed as:

\[\begin{align} Y_{t-1} &= \arg \min_{Y} \|\Omega \circ (G^* - f(X_{t-1},Y))\|_F^2 \\ &= \arg \min_{Y}\|\Omega \circ (2G^* - X_{t-1}Y^T -YX_{t-1}^T)\|_F^2\\ X_t &= \frac{1}{2}(X_{t-1}+Y_{t-1}) \end{align}\]Similar to the previous case, the subproblem is convex in \(Y\) and can be solved efficiently. This heuristic has also worked pretty well in practise. Similarly, my claim is that as \(t \to \infty\), \(X_tX_t^T \to G^*\) at a quadratic rate.

Euclidean Distance Matrix completion

Let \(D^*\) be the EDM produced by an unknown matrix \(X^* \in \mathbb{R}^{n\times k}\). The entries of \(D^*\) are the squared Euclidean distances between the rows of \(X^*\). Let \(\mathcal{K}(A)\) be the following function on square matrices

\[\mathcal{K}(A) = \text{diag}(A)\mathbb{1}+\mathbb{1}\text{diag}(A) - A - A^T\]Here \(\text{diag}(A)\) is the diagonal matrix of \(A\) and \(\mathbb{1}\) is the matrix of all \(1\)s.

Given \(X^*\), the EDM \(D^*\) can be computed using the following equation.

\[D^* = \mathcal{K}(X^*{X^*}^T) = \text{diag}(X^*{X^*}^T)\mathbb{1}+\mathbb{1}\text{diag}(X^*{X^*}^T) - 2 X^*{X^*}^T\]Similar to the PSD completion problem, entries of \(D^*\) are sampled according to some probability \(p\). The goal is to find \(X^*\) given only the sampled entries of \(D^*\). Let \(\Omega\) be the binary sampling matrix. Trying to minimize the following objective function would not work as it is non-convex\(^3\).

\[\|\Omega \circ (D^* - \mathcal{K}(XX^T))\|_F^2\]Because of the way we defined \(\mathcal{K}\),

\[\mathcal{K}(XY^T) = \mathcal{K}(YX^T) = \mathcal{K}(\frac{1}{2}(XY^T+YX^T))\]We initialize our initial estimate \(X_0\) to be a random \(n \times k\) matrix. The iterations proceed as:

\[\begin{align} Y_{t-1} &= \arg \min_{Y} \|\Omega \circ (D^* - \mathcal{K}(X_{t-1}Y^T))\|_F^2 \\ &= \arg \min_{Y}\|\Omega \circ (D^* - (\text{diag}(X_{t-1}Y^T)\mathbb{1}+\mathbb{1}\text{diag}(X_{t-1}Y^T) - X_{t-1}Y^T - YX_{t-1}^T))\|_F^2\\ X_t &= \frac{1}{2}(X_{t-1}+Y_{t-1}) \end{align}\]Again, subproblem is convex in \(Y\) and can be solved efficiently. This heuristic has also worked pretty well in practise. Again, my claim is that as \(t \to \infty\), \(\mathcal{K}(X_tX_t^T) \to D^*\) at a quadratic rate.

I have run several thousands of experiments for all the three problems, and this method has always worked. Why does this method work? Does this method work for other non convex problems? For what type of non-convex problems does this method work? All these are open problems and I would love to have some feedback.

\(^1\) Prateek Jain et.al. give a gradient descent method for computing matrix square root : Computing Matrix Squareroot via Non Convex Local Search.\(^\#\)

\(^2\) It has been proved that PSD matrix completion has no spurious local minima. See these papers from NIPS 2016 and ICML 2017. So gradient descent methods would also work for this problem. \(^\#\)

\(^3\) I strongly believe that EDM completion has no spurious local minima. See my previous blog post about this. In practise, gradient descent has worked for EDM completion.\(^\#\)

\(^\#\) Compared to gradient descent methods, my algorithm converges in fewer iterations, but each iteration takes more time. Gradient descent takes more iterations to converge, but each iteration is fast. When \(n\) is very large, gradient descent wins as solving the convex subproblem at each iteration becomes very expensive.